-

My Favourite Albums of 2018

Spotify recently released their 2018-Wrapped playlists for users. It’s a brilliant feature that I wish more services would implement. Twice in the past I’ve used Apple Music for a few months at a time. Both times I regretted it, and was then annoyed when my year-end stats missed my binge on a new album. It’s an ego-filling service whose greatest purpose is enabling you to tell others how good your music taste is. But who doesn’t love to be told about a band that you never listen to until you discover them yourself?

I thought I’d write a little about my favourite releases of this year.

New Light (Single), John Mayer

My first choice is only one song, but it’s a really great one. This song reminds me of Los Angeles evenings. I think it’s got a very La La Land vibe but can’t quite place where I get that from.

Leon Bridges, Good Thing

This album is a significant departure from Leon Bridges’ early Rhythm and Blues style. I particularly like Bad Bad News and Mrs.

Hill Climber, Vulfpeck

Squeezing in at the very end of the year, I haven’t turned this off since it came out. It’s maybe too early days but I think it’s my favourite Vulfpeck album yet. It’s an album I’d highly recommend listening to from start to finish.

Guide Me Back Home (Live), City and Colour

I don’t usually like live albums much but this one is different. Dallas Green has developed so much as a musician that it’s amazing to hear songs that I grew up listening to (that now seem dated, for want of a better word) performed in a more mature and emotive set.

Living Room, Lawrence

Lawrence are a pop-funk band whose songs feel like a Disney soundtrack. They are fronted by a brother-sister duo with amazing voices. I’m hoping they come across to the UK sometime as I’d love to see them live.

Tom Misch, Geography

Tom Misch has become my current guitar idol. Every song on this album is brilliant.

-

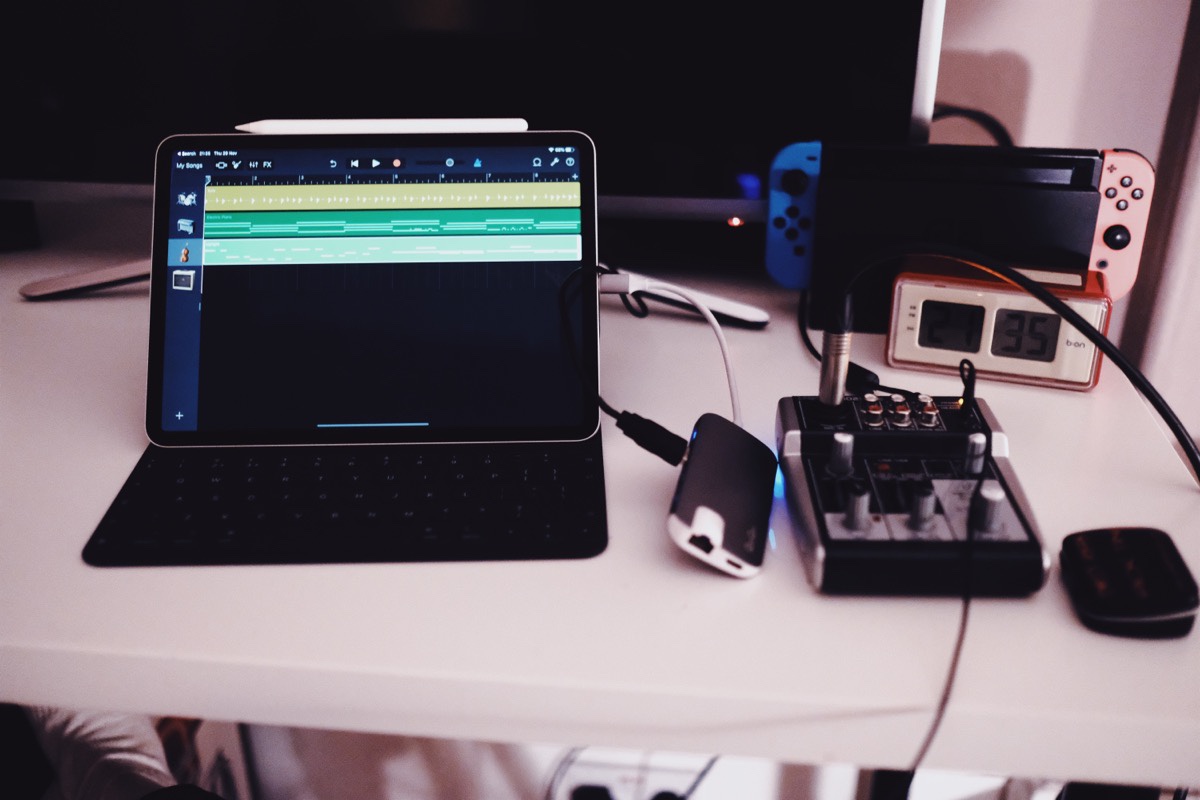

iPad Pro

-

Podcast List - November 2018

A November edition of some podcasts that I’ve been listening to recently.

Podcasts

Dirty John

An interesting short series that best falls into the ‘true crime’ category. I don’t want to say too much about it but would recommend for people who like anything like “They Walk Among Us” or “Up and Vanished”.

The Sourdough Podcast

I first heard about this podcast when Maurizio of The Perfect Loaf was on it. I wouldn’t say you learn a tonne from it but it’s pretty interesting for those who are into baking.

Tools They Use

A podcast that interviews people about their daily tech and daily workflows. I’m a sucker for any tech podcasts so this has become another to add to the pile.

West Cork

One of the best true crime podcast series I’ve heard. Sadly only available on Audible, however if you haven’t signed up before, you can get a free trial and cancel it after you’ve heard the podcast.

Planet Money’s The Indicator

A spin-off show from my previously listed ‘Planet Money’, this is a podcast served in ~10 minute episodes, each a short investigation of a different part of the economy - far more interesting than it sounds.

Up and Vanished

I had Up and Vanished in my first podcast list, however there is a second season available now that focuses on the disappearance of a woman from a hippie cult in Colorado. I don’t think it’s nearly as good as the first season, as there’s far more fluff and filler, but it’s interesting nonetheless.

-

New Favicon

Last week I sold my laptop and received delivery of the new iPad Pro. I’ve a bunch of posts I want to make about the iPad, but here’s a short one for now.

I’ve been meaning to add a favicon for this blog for a while, but just never got round to it. So, this morning, whilst playing around in Procreate, I decided to draw one up. I wanted some depiction of a pizza, however all the references that I could find online were certainly not Neapolitan (the undisputed best form of pizza), so, I made my own. Here is the process as exported from Procreate - a really cool feature that lets you export a time lapse of your drawing.

Yeah

-

Presenting a series of UIAlertControllers with RxSwift

I was recently writing a helper application for work that would store a bunch of URL paths that we support as Deeplinks. The user can pick from one of these paths and will be prompted to input a value for any parameters (any part of the path that matched a specific regex). However, the app got pretty complex when having to deal with multiple capture groups in that regular expression.

The problem was this - given an array of strings (capture groups from my regular expression), show an alert one after the other requesting a value that the user wishes to input for that ‘key’.
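To make the starting point a bit more concrete, here's a rough sketch of how those 'keys' might be pulled out of a path. The `{name}` placeholder syntax, the pattern and the `parameterKeys(in:)` helper are all assumptions for illustration, not what the helper app actually uses.

```swift
import Foundation

// Hypothetical placeholder syntax: any `{name}` segment in a path is a parameter.
// This pattern is an assumption; it is not the regex from the helper app.
let placeholderPattern = "\\{([A-Za-z_]+)\\}"

/// Returns the first capture group of every match, in order of appearance.
func parameterKeys(in path: String, pattern: String = placeholderPattern) -> [String] {
    guard let regex = try? NSRegularExpression(pattern: pattern) else { return [] }
    let fullRange = NSRange(path.startIndex..<path.endIndex, in: path)
    return regex.matches(in: path, options: [], range: fullRange).compactMap { match in
        // Range 0 is the whole match; range 1 is the first capture group.
        guard match.numberOfRanges > 1,
              let range = Range(match.range(at: 1), in: path) else { return nil }
        return String(path[range])
    }
}

let keys = parameterKeys(in: "/products/{category}/{productId}")
print(keys) // ["category", "productId"]
```

Each of those keys would then need its own alert, shown one after the other.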

Traditionally, I would have probably made some UIAlertController-controller that might look something like this.

```swift
protocol AlertPresenting: class {
    func present(alert: UIViewController)
}

protocol MultipleAlertControllerOutput: class {
    func didFinishDisplayingAlerts(withChoices choices: [(String, AlertViewModel)])
}

class MultipleAlertController {
    private var iterations = 0
    private var viewModels = [AlertViewModel]()
    private var results = [(String, AlertViewModel)]()
    private weak var alertPresenter: AlertPresenting?
    weak var output: MultipleAlertControllerOutput?

    func display(alerts alertViewModels: [AlertViewModel]) {
        self.iterations = 0
        self.results = []
        self.viewModels = alertViewModels
        self.showNextAlert()
    }

    private func showNextAlert() {
        guard viewModels.indices.contains(self.iterations) else {
            self.output?.didFinishDisplayingAlerts(withChoices: self.results)
            return
        }
        self.display(alert: viewModels[self.iterations])
    }

    private func display(alert: AlertViewModel) {
        // some stuff here showing alert, invoking show next alert after completion
        iterations += 1
    }
}
```

Now this isn’t necessarily bad, but having to keep track of or reset state can always lead to bugs - particularly in asynchronous situations. Instead I had an idea for doing this using RxSwift.

```swift
import RxSwift

func populateParameters(for alertViewModels: [AlertViewModel], presentingAlertFrom viewController: ViewControllerPresenting?) -> Observable<[RegexReplacement]> {
    return Observable
        .from(alertViewModels)
        .concatMap { alertViewModel -> Observable<(String, AlertViewModel)> in
            guard let vc = viewController else { return Observable.empty() }
            let textFieldResponse: Observable<String> = vc.show(textFieldAlertViewModel: alertViewModel)
            return textFieldResponse
                .map { textInputValue in (textInputValue, alertViewModel) }
        }
        .toArray()
}
```

```swift
// Helper method for Rx UIAlertControllers
protocol ViewControllerPresenting: class {
    func present(viewController: UIViewController)
}

extension ViewControllerPresenting {
    func show(textFieldAlertViewModel: AlertViewModel) -> Observable<String> {
        return Observable.create { [weak self] observer in
            let alert = UIAlertController(title: textFieldAlertViewModel.title, message: textFieldAlertViewModel.message, preferredStyle: .alert)
            alert.addAction(.init(title: "Cancel", style: .cancel, handler: { _ in
                observer.onCompleted()
            }))
            alert.addAction(.init(title: "Submit", style: .default, handler: { _ in
                observer.onNext(alert.textFields?.first?.text ?? "")
                observer.onCompleted()
            }))
            alert.addTextField(configurationHandler: { _ in })
            self?.present(viewController: alert)
            return Disposables.create {
                alert.dismiss(animated: true, completion: nil)
            }
        }
    }
}
```

Now, this looks like a lot of code, but the lower part is simply a wrapper around UIAlertController with Rx, something that can be re-used for any UIAlertController with a text field.

Let’s break down the main bits.

```swift
Observable.from(alertViewModels)
```

This little snippet takes an array and gives you an observable that emits each element of the array. In this example that will be an event for each match in the regular expression.

```swift
.concatMap { alertViewModel -> Observable<(String, AlertViewModel)> in
    guard let vc = viewController else { return Observable.empty() }
    let textFieldResponse: Observable<String> = vc.show(textFieldAlertViewModel: alertViewModel)
    return textFieldResponse
        .map { textInputValue in (textInputValue, alertViewModel) }
}
```

Here we take the stream of matches for the regular expression and we `concatMap` an observable of the String that the user typed into a UIAlertController alongside the original AlertViewModel instance. The RxSwift method doing the heavy lifting is the `concatMap`. It waits for a completed event to be sent by the observable text field responses before invoking the next alert.

The result of the `concatMap` is an Observable tuple of `(String, AlertViewModel)`, which can be used by the consumer to populate the matches of the regex with the String values.

We pipe this tuple Observable into a `toArray` which waits for a completed event - which happens automatically for us once every item in the array has had an alert shown.

I’ve cut a bunch of corners in these examples in order to simplify flow and make these two approaches more comparable. You can see the full example in my deeplinking helper app here.
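If the ordering is hard to picture, here's a dependency-free sketch of the same idea in plain Swift: ask for one value at a time, only move on once the current prompt has finished, and hand back everything at the end. This is an analogue for illustration rather than RxSwift itself, and `collectSequentially`, `ask` and `fakePrompt` are hypothetical names.

```swift
// Plain-Swift analogue of "from + concatMap + toArray" (not RxSwift).
// `ask` stands in for presenting an alert. It is assumed to call its
// completion exactly once, passing nil if the user cancelled.
func collectSequentially<Key, Value>(
    _ keys: [Key],
    ask: @escaping (Key, @escaping (Value?) -> Void) -> Void,
    completion: @escaping ([(Value, Key)]) -> Void
) {
    func step(_ index: Int, _ collected: [(Value, Key)]) {
        guard index < keys.count else {
            // Every key has been handled: deliver the full list once.
            completion(collected)
            return
        }
        let key = keys[index]
        ask(key) { value in
            // The next prompt only starts after this one has finished.
            var next = collected
            if let value = value {
                next.append((value, key))
            }
            // A cancelled prompt (nil) adds nothing, but the chain carries on.
            step(index + 1, next)
        }
    }
    step(0, [])
}

// Usage with a fake prompt that answers immediately with the upper-cased key.
let fakePrompt: (String, @escaping (String?) -> Void) -> Void = { key, done in
    done(key.uppercased())
}
var answers: [(String, String)] = []
collectSequentially(["category", "productId"], ask: fakePrompt) { answers = $0 }
print(answers.map { $0.0 }) // ["CATEGORY", "PRODUCTID"]
```

There's no stored `iterations` counter or `results` array to reset here: the progress lives in the arguments passed from one step to the next, which is roughly what the Rx chain gives you for free.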

-

Rab Microlight Alpine

I recently purchased the Rab Microlight Alpine down jacket. Like with my Arcteryx jacket, I thought it might be helpful to others if I shared my opinions and some pictures as there’s limited info or pictures outside of those by the manufacturer online.

I chose this garment to replace a North Face ThermoBall jacket that my brother has adopted as his own. The Rab number uses real down and is noticeably warmer than the synthetic insulation of the North Face. That being said, the Rab fits slimmer, which certainly helps to keep the warmth in.

The jacket comes branded with a small Nikwax - the waterproofing company - logo printed at the back. The down used in the jacket is claimed to be ‘hydrophobic’, but I’m convinced this is just marketing chat. I don’t see how the material /inside/ the jacket can really keep you dry, but I guess it’s better than nothing?

Like the Arcteryx, this jacket has both a good hood and a soft microfibre-like material on the inside of the zip. There’s even a small sleeping-bag-like case that Rab provide for you to pack the jacket away into. Some jackets I have tried can pack away into their own pockets, which removes the need to keep track of a little bag that I will inevitably lose.

The jacket doesn’t pack away /that/ small but it’s so light that you don’t notice it on your person that much.

I went for size Medium and I’m happy with the fit. I’m just shy of 6’2. Feel free to send me any questions, I’ll try and remember to add more pictures when I get some.